Examples

Runnable notebooks covering end-to-end workflows.

Notebooks

- Multimodal (CLIP fine-tuning): GitHub or Google Colab

- LLM evaluations: GitHub or Google Colab

- Reading JSON metadata: GitHub or Google Colab

- Processing video data: GitHub or Google Colab

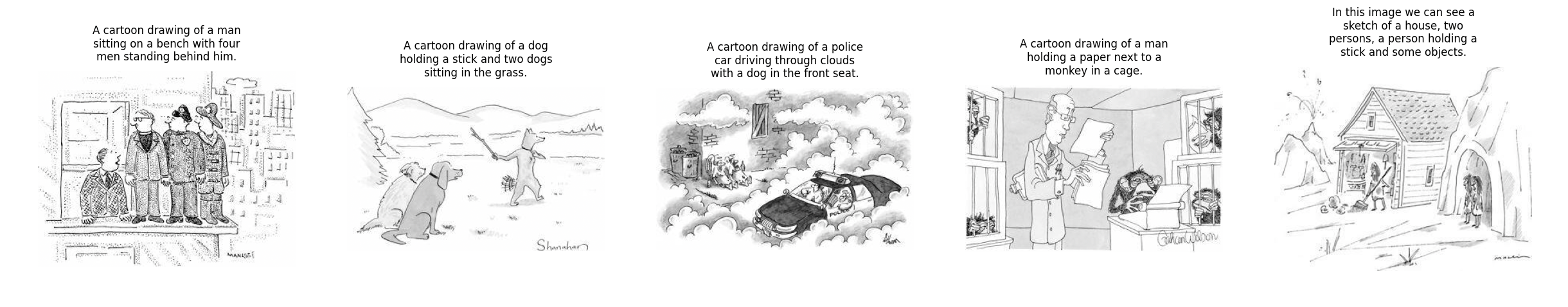

Image Captioning with BLIP

Caption images from cloud storage using the BLIP Large model, with setup() for one-time model initialization:

import datachain as dc  # requires: pip install datachain[hf]
from transformers import Pipeline, pipeline
from datachain import File


def process(file: File, pipeline: Pipeline) -> str:
    # Convert to RGB before passing the image to the captioning pipeline
    image = file.read().convert("RGB")
    return pipeline(image)[0]["generated_text"]


chain = (
    dc.read_storage("gs://datachain-demo/newyorker_caption_contest/images", type="image", anon=True)
    .limit(5)
    .settings(cache=True)
    # setup() initializes the model once; the result is passed to process() as `pipeline`
    .setup(pipeline=lambda: pipeline("image-to-text", model="Salesforce/blip-image-captioning-large"))
    .map(scene=process)
    .persist()
)
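To illustrate why one-time initialization matters, here is a minimal pure-Python sketch of the general pattern. It is not DataChain's implementation: `map_with_setup`, `load_model`, and `caption` are hypothetical names, and the "model" is a stand-in function. The factory runs once, and every row reuses the resource it returned.

```python
from typing import Any, Callable, Iterable, Iterator


def map_with_setup(
    rows: Iterable[Any],
    func: Callable[..., Any],
    **factories: Callable[[], Any],
) -> Iterator[Any]:
    """Hypothetical helper: build each resource once, then reuse it for every row."""
    # Each factory runs exactly once, before any row is processed.
    resources = {name: factory() for name, factory in factories.items()}
    for row in rows:
        yield func(row, **resources)


load_count = 0


def load_model() -> Callable[[str], str]:
    """Stand-in for an expensive model load, such as building a transformers pipeline."""
    global load_count
    load_count += 1
    return lambda text: text.upper()


def caption(row: str, model: Callable[[str], str]) -> str:
    # The keyword name `model` matches the factory name passed to map_with_setup.
    return model(row)


results = list(map_with_setup(["a.jpg", "b.jpg", "c.jpg"], caption, model=load_model))
print(results)     # ['A.JPG', 'B.JPG', 'C.JPG']
print(load_count)  # 1
```

Loading the model inside `caption` instead would repeat the expensive step for every row, which is the cost that a one-time setup step avoids.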

Display the images with their generated captions:

from textwrap import wrap

import matplotlib.pyplot as plt

count = chain.count()
_, axes = plt.subplots(1, count, figsize=(15, 5))

for ax, (img_file, caption) in zip(axes, chain.to_iter("file", "scene")):
    ax.imshow(img_file.read(), cmap="gray")
    ax.axis("off")
    # Wrap long captions to 40 characters per line so titles do not overlap
    wrapped_caption = "\n".join(wrap(caption.strip(), 40))
    ax.set_title(wrapped_caption, fontsize=10, pad=20)

plt.tight_layout()
plt.show()
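The caption wrapping used for the plot titles can be tried on its own, without loading a model. A minimal sketch, assuming a made-up caption string rather than actual BLIP output:

```python
from textwrap import wrap

# Hypothetical caption with stray whitespace, standing in for model output
caption = "  a cartoon of a man sitting at a desk with a cat on his lap  "

# Strip surrounding whitespace, then break the text into lines of at most 40 characters
lines = wrap(caption.strip(), 40)
wrapped_caption = "\n".join(lines)

print(wrapped_caption)
```

`wrap` breaks only at whitespace, so words in ordinary captions stay intact and each title line fits within the chosen width.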